- Kick (an Australian-owned live-streaming service)

- Snapchat

- Threads

- TikTok

- Twitch

- X (formerly Twitter)

- YouTube (for logged-in features)

The list is subject to change. Both supporters and detractors of the legislation have noted that two popular apps targeting Gen Z, Lemon8 (a TikTok alternative) and Yope (a photo-sharing app), are not on the banned list.

If you’re on TikTok and you’ve been wondering why it would allow its influencers to push the rival Lemon8 so aggressively in recent weeks, that’s because they’re not really competitors – they share the same parent company, ByteDance.

Critics say it could turn into a game of Whac-a-Mole.

What are examples of sites and services still open to all?

Inman Grant also highlighted a list of sites not banned to under-16s. It’s heavy on messaging services and gaming platforms. Meta finds itself in the position of having three of its platforms age-restricted (Facebook, Instagram and Threads) but not its closely intertwined Messenger and WhatsApp.

Sites that remain unrestricted include:

- Discord

- GitHub

- Google Classroom

- Lego Play

- Messenger

- Roblox

- Steam and Steam Chat

- YouTube Kids

Can under-16s still watch YouTube?

Yes.

The under-16 restriction applies to logged-in accounts. So if it’s a teacher logged into their YouTube account (or their school’s), they can still show pupils educational content hosted by the Google-owned service.

If someone wants to create a new YouTube account, or log on (to access extra features including uploading content), they will need to pass an age assurance test.

When did the social media ban for under-16s pass?

The Australian Parliament passed the Online Safety Amendment (Social Media Minimum Age) Bill 2024 (SMMA) on November 28, 2024.

Who supported it?

The bill, championed by a bipartisan group of MPs, passed 103 to 13, with the Greens the only party to oppose it (joined by a handful of independents).

When does it come into force?

Today, December 10, 2025.

Why a year-long delay?

It was to give the eSafety Commissioner, the regulator responsible for wrangling the U16 ban, time to work out the logistics – including which services would be age-restricted (see below) – and to run trials on the effectiveness of various age-detection technologies, including those that use a webcam or smartphone camera to assess facial features and movement.

In mid-June, various technologies were deemed not perfect but good enough.

Did the commissioner settle on an age-detection test?

No, the exercise was more to prove that, in her view, age-detection technologies are workable and accurate.

From today, it will be up to age-restricted social media platforms to introduce the age-assurance measures of their choosing to meet the new law’s requirement that they “take reasonable steps to prevent Australians under 16 years old from having accounts”.

What happens if the social media platforms fail to take ‘reasonable steps’ to block under-16s?

They can be fined up to A$49.5m.

But what constitutes ‘reasonable steps’?

As with much major legislation on both sides of the Tasman, it will probably require a test case in the courts to resolve that point.

It will be one of the many elements watched closely on this side of the Tasman.

There have already been stirrings in the opposite direction, with the Australian Financial Review reporting that Reddit is preparing a High Court challenge to the law on the basis the ban restricts “teenagers’ implied right of freedom of political communication”.

The Nasdaq-listed Reddit has yet to comment. It has not made a market filing about any action.

Why not just use a passport or driver’s licence to verify identity?

The UK Government recently introduced a measure requiring certain age-restricted sites, including porn sites, to verify age by asking users to upload a passport, driver’s licence or other Government ID (a move that has seen many of those wanting to access adult content turn to VPN, or virtual private network, software to make it seem to a site that they’re accessing it from outside the UK).

Australia’s new law expressly prohibits the use of Government ID to verify age, however. Privacy concerns were cited.

What’s the penalty for children or parents who break the new law?

Nothing.

Kids can go for broke trying to create a new account with a fake age, without legal consequences (although it could complicate their online accounts in adult life). Some parents who object to the new law as heavy-handed and impractical, like tech commentator Trevor Long, are even actively highlighting shenanigans.

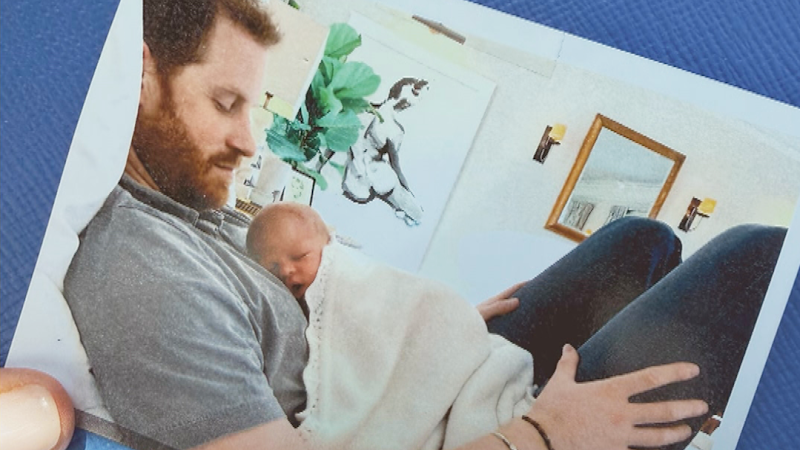

Long posted a TikTok of his daughter, who he said had just turned 15, passing Snapchat’s over-16 age verification test, and Instagram’s – see the TikTok clip above. (While opposing the legislation, the major platforms have also been moving to weed out their under-16 users since December 4.)

“Cool” parents will be able to create family accounts that in reality are primarily for an under-16 user, without blowback.

Is it okay to not be 100% effective?

“From the beginning, we’ve acknowledged this process won’t be 100% perfect,” Australian Prime Minister Anthony Albanese said on Sunday.

“But the message this law sends will be 100%. For example, Australia sets the legal drinking age at 18 because our society recognises the benefits to the individual and the community of such an approach. The fact that teenagers occasionally find a way to have a drink doesn’t diminish the value of having a clear, national standard.”

Can there be a new social norm?

The PM pitched the new law as a way for parents to push back against peer pressure.

“You don’t have to worry that by stopping your child using social media, you’re somehow making them the odd one out,” Albanese said.

“Now, instead of trying to set a ‘family rule’, you can point to a national ban.”

Similarly, Cecilia Robinson, co-chairwoman of B416 – a group that thinks New Zealand should follow suit – told Herald NOW (see clip above) that she expects “teething issues” with the new Aussie legislation but that:

“The key thing we’re really looking for is social norming. When we’re talking about introducing a minimum age for young people on social media, what we’re really saying is we need to create new social norms, and not set an expectation that people should be able to access unlimited social media from an early age.

“We will see a generation of young people who go through the change of having access to not having access. But the next young people coming through will be normalised to the fact they never did.”

What’s happening in NZ?

As things stand, the next generation coming through in New Zealand won’t be normalised to a social media-free world.

Our Government has not introduced legislation for an under-16 ban.

In May, National MP Catherine Wedd put forward a private member’s bill for a U16 social media ban.

Prime Minister Christopher Luxon supported Wedd to the degree that he appeared with her at a press conference where she outlined her bill – but his Government did not endorse it (meaning it will sit on the sidelines, possibly forever, unless drawn in a ballot of various private member bills).

Luxon did, however, announce that the education and workforce select committee would hold an “Inquiry into harm young New Zealanders encounter online”. The committee heard submissions through to July but has yet to report back.

One wrinkle: several MPs with coalition partner Act have made sceptical comments about an under-16 ban, including leader David Seymour, who called Wedd’s bill “simple, neat and wrong”.

Postscript: Going beyond an under-16 ban

Citing stats collected by Youthline and others, B416 says social media has sparked a youth “mental health crisis”, particularly from the point that social media went mobile on smartphones, with the social media firms too slow to introduce voluntary controls.

(In September, Meta introduced Youth Accounts for Facebook and Messenger users in New Zealand, following a similar move across the Tasman, and similar controls were introduced for Instagram. Protections include all under-16 accounts being automatically set to private. Meta said measures were underpinned by complex systems that had taken a long time to build. The measures were not being implemented in reaction to political developments, a spokeswoman said.)

B416 co-chairwoman Anna Curzon told the Herald she sees Luxon’s Government as potentially open to an under-16 social media ban, particularly as last year it implemented a cellphone-in-schools ban (by tweaking an existing law).

But Curzon said her group also wants the Government to address AI chatbots, which she sees as emerging as an equal threat to youth mental health – but one that is almost entirely unregulated.

B416 also wants a fully Crown-funded regulator to be established, like Australia’s eSafety Commissioner – as opposed to Netsafe here, which is primarily funded by contracts with the Ministry of Education and the Ministry of Justice, but also receives money from social media platforms.

Netsafe has opposed an under-16 ban, with its chief executive Brent Carey arguing social media is the only vehicle for some teens to find support in a debate he regards as often ignoring the youth perspective.

Netsafe supporters, including retired District Court Judge David Harvey, say it’s important that Netsafe – as the agency responsible for dealing with complaints under the Harmful Digital Communications Act 2014 – has a degree of independence from the Government as it weighs privacy versus free speech issues.

Chris Keall is an Auckland-based member of the Herald’s business team. He joined the Herald in 2018 and is the technology editor and a senior business writer.